CondDensityEstimator Hyperparameter Tuning with Optuna

In this notebook, we will use Optuna to tune the hyperparameters of a conditional density estimator (CDE). Optuna is a framework-agnostic hyperparameter tuning package. Given a search space of hyperparameters, it samples that space adaptively, favoring parameter values that are more likely to improve the objective you are minimizing or maximizing.

In this notebook, we will also take advantage of an early-stopping protocol, where Optuna looks at the validation loss at each epoch of network training and stops the trial if it is unlikely to beat the best set of hyperparameters found so far.

Before starting, please make sure you have keras and optuna installed in your environment.

[1]:

import numpy as np
from sklearn.model_selection import train_test_split

import visual_bars.generate_visual_bars_data as vbd
from cfl.experiment import Experiment

import optuna
from optuna.integration import TFKerasPruningCallback
from optuna.trial import TrialState

from keras import Sequential
from keras.layers import Dense, Dropout

Load Data

We will be using the visual bars example data for this tutorial. To learn more about this included dataset, please refer to the visual bars background page. If you would like to see a standard example of running CFL on this dataset without hyperparameter tuning, please refer to the main CFL code tutorial.

Here, we create a dataset of 20000 samples, where the cause is a 10x10 image and the effect is a binary 1D variable. For the sake of simplicity, we will be tuning a standard feed-forward network (as opposed to a convolutional neural network), so we flatten each image into a vector of length 100, giving X the shape (20000, 100).

Since we want to evaluate our models on the same subset of the data across all hyperparameter combinations, we generate in-sample and out-of-sample indices to pass to CFL. If these are not provided, CFL generates this split randomly, and it would look different across every trial.
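To make the point about a fixed split concrete, here is a minimal sketch using only the Python standard library. The helper `split_indices` is hypothetical and only stands in for `train_test_split`; it is not part of CFL or scikit-learn. It shows that a fixed seed reproduces the same in-sample and out-of-sample indices on every call, while a different seed does not.

```python
import random

def split_indices(n_samples, train_frac, seed=None):
    """Shuffle 0..n_samples-1 and cut it into train/validation index lists."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    n_train = int(n_samples * train_frac)
    return idx[:n_train], idx[n_train:]

# Same seed: identical split on every call, so every trial is scored
# on the same held-out samples.
train_a, val_a = split_indices(1000, 0.75, seed=42)
train_b, val_b = split_indices(1000, 0.75, seed=42)
print(train_a == train_b and val_a == val_b)

# Different seed: a different split, which would add split-to-split
# noise to the comparison between trials.
train_c, val_c = split_indices(1000, 0.75, seed=7)
print(val_a == val_c)
```

Passing the resulting indices explicitly, as this notebook does with in_sample_idx and out_sample_idx, keeps the validation loss comparable across trials.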

[2]:

# create visual bars data
n_samples = 20000
im_shape = (10, 10)
noise_lvl = 0.03
random_state = 180
vb_data = vbd.VisualBarsData(n_samples=n_samples, im_shape=im_shape,
                             noise_lvl=noise_lvl, set_random_seed=random_state)

# retrieve the images and the target
X = vb_data.getImages()
X = np.reshape(X, (X.shape[0], np.prod(X.shape[1:])))  # flatten images
Y = vb_data.getTarget()
Y = np.expand_dims(Y, -1)
print(X.shape)
print(Y.shape)

# define train and validation sets to remain constant across tuning
in_sample_idx, out_sample_idx = train_test_split(np.arange(X.shape[0]),
                                                 train_size=0.75,
                                                 random_state=42)

(20000, 100)

(20000, 1)

Make a CDE network

Next, we need to outline the architecture of our neural network. We use Optuna’s trial.suggest_xxx methods to determine the number of layers, number of units in each layer, and the amount of dropout after each layer in the network. For more information on how to use Optuna to suggest hyperparameters, please refer to their documentation.
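Some of the suggestions in this notebook pass log=True (the layer sizes and the learning rate). As a rough illustration of what that flag means, the sketch below draws values uniformly in log space using only the standard library. The helper `sample_log_uniform` is hypothetical, and this is not how Optuna's default sampler chooses values (it adapts to earlier trials); it only shows the scale on which the range is explored.

```python
import math
import random

def sample_log_uniform(rng, low, high):
    """Draw a value whose logarithm is uniform on [log(low), log(high)]."""
    return math.exp(rng.uniform(math.log(low), math.log(high)))

rng = random.Random(0)
samples = [sample_log_uniform(rng, 1e-5, 1e-3) for _ in range(10_000)]

# On a log scale each decade gets about the same share of draws:
# roughly half fall in [1e-5, 1e-4) and half in [1e-4, 1e-3].
frac_low_decade = sum(s < 1e-4 for s in samples) / len(samples)
print(f"fraction below 1e-4: {frac_low_decade:.2f}")
```

With a plain uniform draw over the same range, only about 9% of samples would land below 1e-4, so small learning rates would rarely be tried.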

[3]:

def build_network(trial):
    # vary number of layers
    n_layers = trial.suggest_int("n_layers", 1, 5)
    model = Sequential()
    for i in range(n_layers):
        # vary size of each layer
        num_hidden = trial.suggest_int("n_units_l{}".format(i), 4, 512, log=True)
        model.add(Dense(num_hidden, activation="relu"))
        # vary amount of dropout for each layer
        dropout = trial.suggest_float("dropout_l{}".format(i), 0.0, 0.5)
        model.add(Dropout(rate=dropout))
    # add output layer with size of target
    model.add(Dense(Y.shape[1], activation="linear"))
    return model

Define the tuning objective

Optuna takes in an objective function to perform tuning over. An objective function should, assuming some set of hyperparameters generated by the current optuna trial, 1) build our model of interest, 2) run the model, and 3) evaluate the model and return some metric of how well it did.

Here, we define the parameters for a CFL Experiment that contains one Block: a CDE. Specifically, we use a ‘CondExpDIY’ CDE because 1) for computational ease, we want to estimate the conditional expectation E[Y|X] rather than the full distribution P(Y|X), and 2) we want to provide our own model (defined above) instead of using a pre-defined model that CFL supplies.

Note that some of the parameters in CDE_params (‘lr’ and ‘batch_size’) are also defined by Optuna trial.suggest_xxx methods and will be optimized in the same way as the network architecture parameters.

Lastly, once the CDE has been trained, we pull the final validation loss from the packaged cfl results and return it as our evaluation of the current set of hyperparameters.

[16]:

def objective(trial):
    # define the parameters for a CFL Experiment
    data_info = {'X_dims': X.shape, 'Y_dims': Y.shape, 'Y_type': 'categorical'}
    CDE_params = {
        'model' : 'CondExpDIY',
        'model_params' : {
            'optuna_callback' : TFKerasPruningCallback(trial, "val_loss"),
            'optuna_trial' : trial,
            'build_network' : build_network,
            'batch_size' : trial.suggest_int("batch_size", 16, 128),
            'n_epochs' : 100,
            'optimizer' : 'adam',
            'opt_config' : {'lr' : trial.suggest_float("lr", 1e-5, 1e-3, log=True)},
            'loss' : 'mean_squared_error',
            'best' : True,
            'verbose' : 0,
            'early_stopping' : True,
        }
    }
    block_names = ['CondDensityEstimator']
    block_params = [CDE_params]

    my_exp = Experiment(X_train=X,
                        Y_train=Y,
                        in_sample_idx=in_sample_idx,
                        out_sample_idx=out_sample_idx,
                        data_info=data_info,
                        block_names=block_names,
                        block_params=block_params,
                        verbose=0,
                        results_path=None)  # we don't want to save 100's of results

    # train the CDE and return the final validation loss as the trial's score
    train_results = my_exp.train()
    score = train_results['CondDensityEstimator']['val_loss'][-1]
    return score

Run an Optuna study

Finally, we are ready to optimize our hyperparameters. We first create a ‘study’ which will minimize the score returned by objective above, and will prune training during trials where the validation loss isn’t dropping at a promising rate.
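The study below uses Optuna's MedianPruner. As a simplified sketch of the idea behind a median stopping rule (not Optuna's actual implementation), the hypothetical function `should_prune` compares the running trial's best loss so far with the median loss that earlier trials reported at the same epoch. It waits until a few trials have finished, in the spirit of the pruner's startup-trials setting; the losses in `history` are made-up numbers.

```python
from statistics import median

def should_prune(current_losses, finished_histories, n_startup_trials=5):
    """Simplified median rule for a minimization problem.

    current_losses: validation losses of the running trial, one per epoch so far.
    finished_histories: per-epoch loss lists from earlier trials.
    """
    if len(finished_histories) < n_startup_trials:
        return False  # not enough history to compare against
    step = len(current_losses) - 1
    # Losses that earlier trials reported at this same epoch.
    peers = [h[step] for h in finished_histories if len(h) > step]
    if not peers:
        return False
    # Prune when the trial's best loss so far is worse than the peers' median.
    return min(current_losses) > median(peers)

history = [
    [0.40, 0.30, 0.25],
    [0.35, 0.28, 0.20],
    [0.50, 0.45, 0.40],
    [0.38, 0.27, 0.22],
    [0.42, 0.33, 0.26],
]
print(should_prune([0.60], history))  # first-epoch loss above the median of 0.40
print(should_prune([0.36], history))  # first-epoch loss below the median of 0.40
```

This is why a trial can be stopped after only one or two epochs once enough earlier trials have completed.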

We call study.optimize to try 10 different samples of hyperparameters from the space we described with the trial.suggest_xxx methods. In practice, you will want to try many more hyperparameter combinations (which you can set through the n_trials argument below).

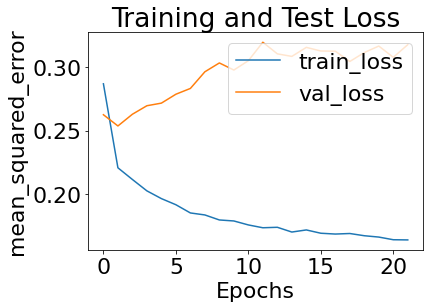

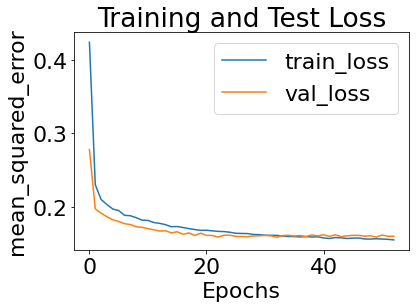

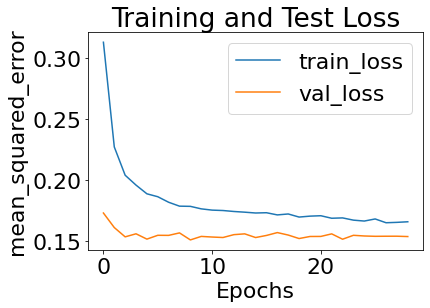

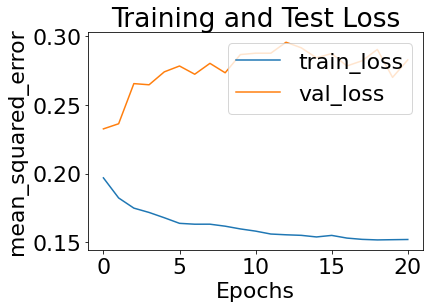

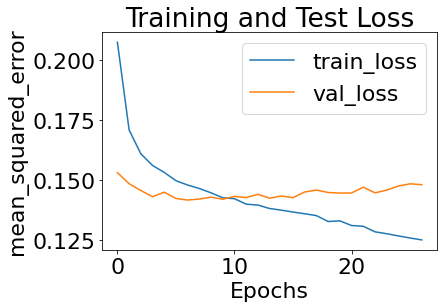

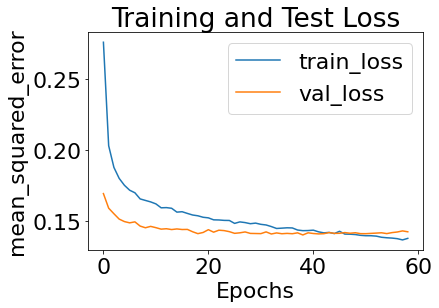

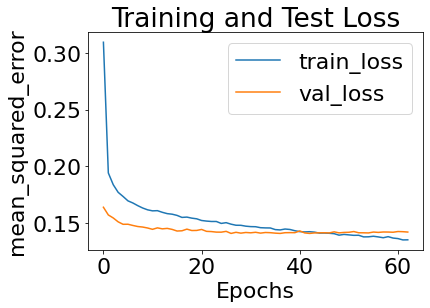

By setting show_plot to True in CDE_params, we can visualize how the loss varies across different hyperparameter combinations.

[17]:

study = optuna.create_study(direction="minimize", pruner=optuna.pruners.MedianPruner())

study.optimize(objective, n_trials=10)

[I 2022-04-01 15:43:06,847] A new study created in memory with name: no-name-d8aa3c6d-62dc-47ae-91ac-aee562ace5b9

Block: verbose not specified in input, defaulting to 1

WARNING:tensorflow:AutoGraph could not transform <function Model.make_train_function.<locals>.train_function at 0x1a4c2a95f0> and will run it as-is.

Please report this to the TensorFlow team. When filing the bug, set the verbosity to 10 (on Linux, `export AUTOGRAPH_VERBOSITY=10`) and attach the full output.

Cause: 'arguments' object has no attribute 'posonlyargs'

To silence this warning, decorate the function with @tf.autograph.experimental.do_not_convert

[I 2022-04-01 15:43:47,458] Trial 0 finished with value: 0.3172536790370941 and parameters: {'batch_size': 23, 'lr': 0.00010775525177725577, 'n_layers': 5, 'n_units_l0': 11, 'dropout_l0': 0.3903148662273171, 'n_units_l1': 181, 'dropout_l1': 0.11695978734553025, 'n_units_l2': 195, 'dropout_l2': 0.44569404315026095, 'n_units_l3': 4, 'dropout_l3': 0.46439788790141745, 'n_units_l4': 92, 'dropout_l4': 0.08699934386147978}. Best is trial 0 with value: 0.3172536790370941.

[I 2022-04-01 15:44:29,006] Trial 1 finished with value: 0.1596677303314209 and parameters: {'batch_size': 108, 'lr': 2.1381673250042515e-05, 'n_layers': 5, 'n_units_l0': 17, 'dropout_l0': 0.04523916844676085, 'n_units_l1': 222, 'dropout_l1': 0.14298986554926724, 'n_units_l2': 129, 'dropout_l2': 0.10457664459125571, 'n_units_l3': 180, 'dropout_l3': 0.2014939561454271, 'n_units_l4': 193, 'dropout_l4': 0.24951141600328575}. Best is trial 1 with value: 0.1596677303314209.

[I 2022-04-01 15:44:54,499] Trial 2 finished with value: 0.1538044661283493 and parameters: {'batch_size': 44, 'lr': 0.00028887978890040927, 'n_layers': 3, 'n_units_l0': 266, 'dropout_l0': 0.27350843620215715, 'n_units_l1': 7, 'dropout_l1': 0.240795868909433, 'n_units_l2': 10, 'dropout_l2': 0.2586753115734922}. Best is trial 2 with value: 0.1538044661283493.

[I 2022-04-01 15:45:30,844] Trial 3 finished with value: 0.2826230823993683 and parameters: {'batch_size': 24, 'lr': 9.12116430942231e-05, 'n_layers': 4, 'n_units_l0': 60, 'dropout_l0': 0.3345292372662158, 'n_units_l1': 142, 'dropout_l1': 0.37366260933900974, 'n_units_l2': 152, 'dropout_l2': 0.23042070465277703, 'n_units_l3': 385, 'dropout_l3': 0.2786944105090963}. Best is trial 2 with value: 0.1538044661283493.

[I 2022-04-01 15:45:49,520] Trial 4 finished with value: 0.14809727668762207 and parameters: {'batch_size': 63, 'lr': 0.000354432971720819, 'n_layers': 3, 'n_units_l0': 93, 'dropout_l0': 0.022670093448453554, 'n_units_l1': 8, 'dropout_l1': 0.06405717592558907, 'n_units_l2': 42, 'dropout_l2': 0.19487686263996457}. Best is trial 4 with value: 0.14809727668762207.

[I 2022-04-01 15:46:22,849] Trial 5 finished with value: 0.14239360392093658 and parameters: {'batch_size': 50, 'lr': 0.00014083659085561683, 'n_layers': 2, 'n_units_l0': 80, 'dropout_l0': 0.21122109285535867, 'n_units_l1': 37, 'dropout_l1': 0.2252598778382316}. Best is trial 5 with value: 0.14239360392093658.

[I 2022-04-01 15:46:26,013] Trial 6 pruned. Trial was pruned at epoch 0.

[I 2022-04-01 15:46:27,296] Trial 7 pruned. Trial was pruned at epoch 0.

[I 2022-04-01 15:46:29,354] Trial 8 pruned. Trial was pruned at epoch 0.

[I 2022-04-01 15:46:32,370] Trial 9 pruned. Trial was pruned at epoch 1.
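The "pruned at epoch N" messages above mean the pruner judged a trial's validation loss too poor, compared with earlier trials at the same epoch, to be worth finishing. As a rough illustration only, the sketch below assumes a median-style rule in the spirit of Optuna's default `MedianPruner`; the pruner actually used is whichever one was passed when the study was created. The function `should_prune`, its arguments, and the loss values are all hypothetical and made up for this example.

```python
from statistics import median


def should_prune(current_loss, step, finished_histories, n_startup_trials=5):
    """Simplified median rule for a loss that is being minimized.

    finished_histories holds one list of per-epoch validation losses
    for each earlier trial (hypothetical data structure).
    """
    # Losses that earlier trials reported at the same epoch
    peers = [history[step] for history in finished_histories if len(history) > step]
    # Never prune until enough earlier trials are available for comparison
    if len(peers) < n_startup_trials:
        return False
    # Prune when the current trial is worse than the median of its peers
    return current_loss > median(peers)


# Made-up validation-loss curves for five earlier trials
histories = [
    [0.30, 0.22, 0.18],
    [0.28, 0.20, 0.15],
    [0.35, 0.25, 0.21],
    [0.27, 0.19, 0.16],
    [0.33, 0.24, 0.20],
]

print(should_prune(0.40, 0, histories))  # worse than the median at epoch 0
print(should_prune(0.26, 0, histories))  # better than the median at epoch 0
```

A trial that starts far above the median can therefore be dropped as early as epoch 0, which is consistent with how quickly Trials 8 and 9 were stopped.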

Print out study statistics and optimal hyperparameters found

[18]:

pruned_trials = study.get_trials(deepcopy=False, states=[TrialState.PRUNED])
complete_trials = study.get_trials(deepcopy=False, states=[TrialState.COMPLETE])

print("Study statistics: ")
print(" Number of finished trials: ", len(study.trials))
print(" Number of pruned trials: ", len(pruned_trials))
print(" Number of complete trials: ", len(complete_trials))

print("Best trial:")
trial = study.best_trial
print(" Value: ", trial.value)

print(" Params: ")
for key, value in trial.params.items():
    print(" {}: {}".format(key, value))

Study statistics:

Number of finished trials: 10

Number of pruned trials: 4

Number of complete trials: 6

Best trial:

Value: 0.14239360392093658

Params:

batch_size: 50

lr: 0.00014083659085561683

n_layers: 2

n_units_l0: 80

dropout_l0: 0.21122109285535867

n_units_l1: 37

dropout_l1: 0.2252598778382316
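The best trial stores its hyperparameters as a flat dictionary, with per-layer values keyed by layer index (`n_units_l0`, `dropout_l0`, and so on). As a small sketch of how those names map back onto a network layout, the snippet below copies the values printed above into a plain dictionary and groups them by layer. The helper `layer_specs` is a hypothetical name introduced for illustration and is not part of CFL or Optuna.

```python
# Values copied from the best-trial printout above
best_params = {
    "batch_size": 50,
    "lr": 0.00014083659085561683,
    "n_layers": 2,
    "n_units_l0": 80,
    "dropout_l0": 0.21122109285535867,
    "n_units_l1": 37,
    "dropout_l1": 0.2252598778382316,
}


def layer_specs(params):
    """Return one (n_units, dropout_rate) pair per hidden layer."""
    return [
        (params[f"n_units_l{i}"], params[f"dropout_l{i}"])
        for i in range(params["n_layers"])
    ]


for i, (units, rate) in enumerate(layer_specs(best_params)):
    print(f"layer {i}: Dense({units}, relu) -> Dropout({rate:.3f})")
```

In the notebook itself, the same lookup can be done directly on `study.best_trial.params`, which is what the next cell does.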

Rerun CFL with the optimized hyperparameters

Now we run CFL with a CDE parameterized by the best hyperparameters printed in the previous cell, followed by a CauseClusterer that clusters the expected conditional probability estimated by the CDE to construct macrovariable states.

[20]:

# build the network from the best hyperparameters found by the study
def build_optimized_model():
    # use the tuned number of layers
    n_layers = study.best_trial.params['n_layers']
    model = Sequential()
    for i in range(n_layers):
        # use the tuned size of each layer
        num_hidden = study.best_trial.params[f'n_units_l{i}']
        model.add(Dense(num_hidden, activation="relu"))

        # use the tuned amount of dropout for each layer
        dropout = study.best_trial.params[f'dropout_l{i}']
        model.add(Dropout(rate=dropout))

    # add output layer with size of target
    model.add(Dense(Y.shape[1], activation="linear"))
    return model

data_info = {'X_dims': X.shape, 'Y_dims': Y.shape, 'Y_type': 'categorical'}

CDE_params = {
    'model' : 'CondExpDIY',
    'model_params' : {
        'build_network' : build_optimized_model,
        'batch_size' : study.best_trial.params['batch_size'],
        'n_epochs' : 100,
        'optimizer' : 'adam',
        'opt_config' : {'lr' : study.best_trial.params['lr']},
        'loss' : 'mean_squared_error',
        'best' : True,
        'verbose' : 0,
        'early_stopping' : True
    }
}

cause_cluster_params = {'model' : 'KMeans',
                        'model_params' : {'n_clusters' : 4},
                        'verbose' : 0}

block_names = ['CondDensityEstimator', 'CauseClusterer']
block_params = [CDE_params, cause_cluster_params]

my_exp = Experiment(X_train=X, Y_train=Y, data_info=data_info,
                    in_sample_idx=in_sample_idx, out_sample_idx=out_sample_idx,
                    block_names=block_names, block_params=block_params,
                    results_path='demo_results')

train_results = my_exp.train()

All results from this run will be saved to demo_results/experiment0003

Block: verbose not specified in input, defaulting to 1

#################### Beginning CFL Experiment training. ####################

Beginning CondDensityEstimator training...

CondDensityEstimator training complete.

Beginning CauseClusterer training...

CauseClusterer training complete.

Experiment training complete.

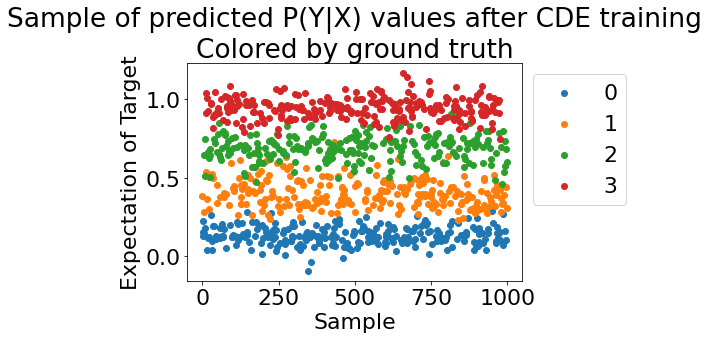
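To make the CauseClusterer step more concrete, here is a minimal sketch of running KMeans with four clusters on one-dimensional conditional-probability estimates. It assumes scikit-learn is available (it is already imported at the top of this notebook) and does not reproduce CFL's internals. The array `pyx` is synthetic, the four centre values are made up for illustration, and they are not the visual bars ground truth.

```python
import numpy as np
from sklearn.cluster import KMeans

rng = np.random.default_rng(0)

# Synthetic stand-in for the CDE output: 50 samples around each of four
# made-up P(Y|X) levels, with a little noise
centers = np.array([0.1, 0.4, 0.7, 0.9])
pyx = np.concatenate(
    [c + 0.01 * rng.standard_normal(50) for c in centers]
).reshape(-1, 1)

# Cluster the estimates into four groups, mirroring n_clusters=4 above
kmeans = KMeans(n_clusters=4, n_init=10, random_state=0)
x_lbls = kmeans.fit_predict(pyx)

# Report the mean estimate within each recovered cluster
for k in range(4):
    print(k, round(float(pyx[x_lbls == k].mean()), 2))
```

Samples with similar estimated P(Y|X) end up sharing a label, which is the sense in which the cluster labels act as macrovariable states.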

Visualize the conditional probability learned by CFL with the optimized hyperparameters

[21]:

from cfl.visualization.cde_diagnostic import pyx_scatter

pyx_scatter(my_exp, vb_data.getGroundTruth())

[21]:

(<Figure size 432x288 with 1 Axes>,

<AxesSubplot:title={'center':'Sample of predicted P(Y|X) values after CDE training\nColored by ground truth'}, xlabel='Sample', ylabel='Expectation of Target'>)

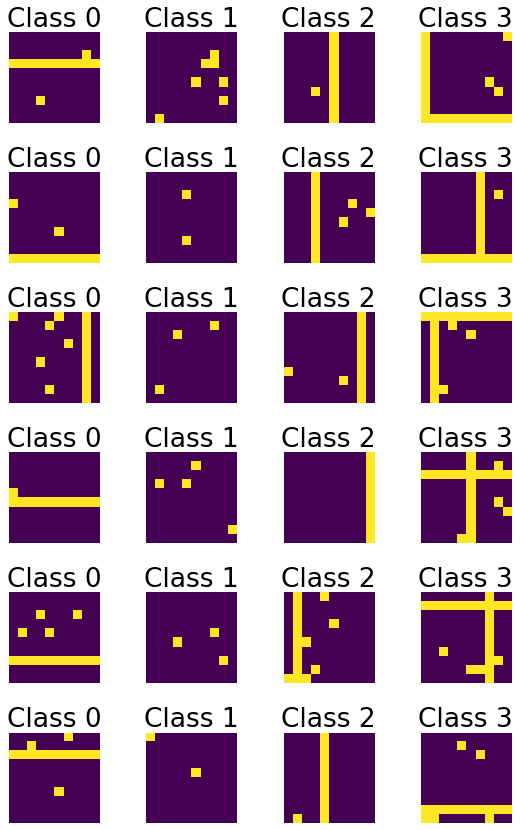

Visualize the macrovariable states that CFL finds with this set of hyperparameters

[22]:

import visual_bars.visual_bars_vis as vis

vis.viewImagesAndLabels(X, im_shape=im_shape, n_examples=6,
                        x_lbls=train_results['CauseClusterer']['x_lbls'])
